Last week, Google launched its new AI, or rather its new large language model, dubbed Gemini. The Gemini 1.0 model comes in three versions: Gemini Nano is intended to be best suited for on-device tasks, Gemini Pro is intended to be the best option for a wider range of tasks, and Gemini Ultra is Google's largest language model, built to handle the most complex tasks you can give it.

One thing Google was keen to highlight at the launch of Gemini Ultra was that the language model outperformed the latest version of OpenAI's GPT-4 on 30 of the 32 most commonly used tests for measuring the capabilities of language models. The tests cover everything from reading comprehension and various math questions to writing Python code and image analysis. In some of the tests, the difference between the two AI models was only a few tenths of a percentage point, while in others it was as much as ten percentage points.

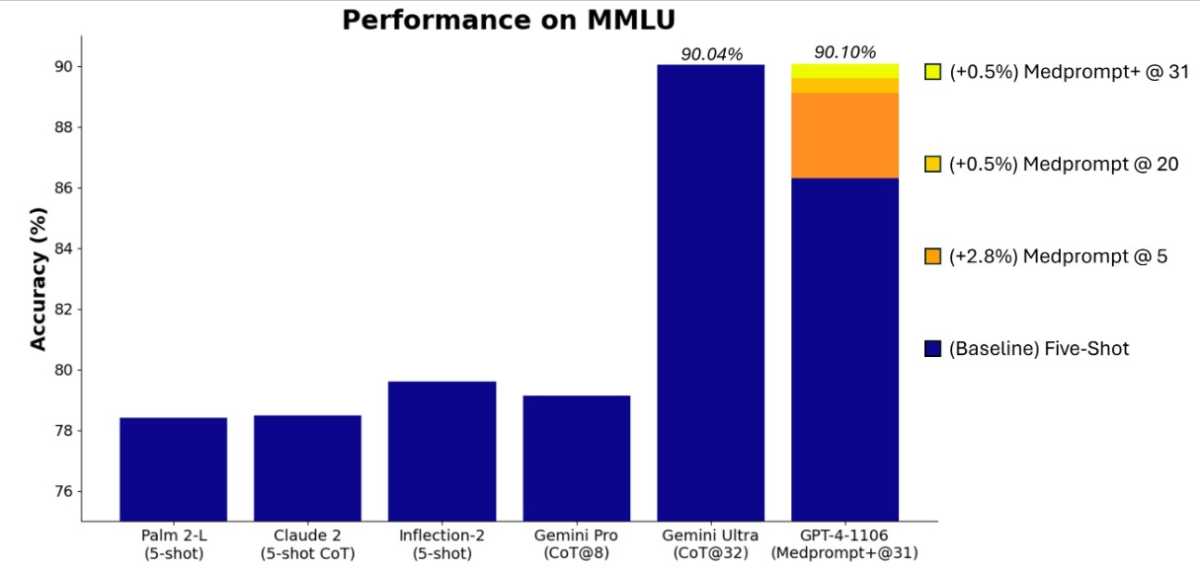

Perhaps Gemini Ultra's most impressive achievement, however, is that it is the first language model to beat human experts on the massive multitask language understanding (MMLU) test, in which Gemini Ultra and the experts were given problem-solving tasks in 57 different fields, ranging from math and physics to medicine, law, and ethics. Gemini Ultra achieved a score of 90.0 percent, while the human expert it was compared with "only" scored 89.8 percent.

The rollout of Gemini will be gradual. Last week, Gemini Pro became available to the public when Google's chatbot Bard started using a modified version of the language model, and Gemini Nano is built into a number of features on Google's Pixel 8 Pro smartphone. Gemini Ultra isn't ready for the public yet. Google says it is still undergoing safety testing and is only being shared with a handful of developers and partners, as well as experts in AI responsibility and safety. The plan, however, is to make Gemini Ultra available to the public through Bard Advanced when it launches early next year.

Microsoft has now countered Google's claim that Gemini Ultra beats GPT-4 by having GPT-4 run the same tests again, but this time with slightly modified prompts, or inputs. In November, Microsoft researchers published research on something they called Medprompt, a combination of different techniques for feeding prompts into a language model to get better results. You may have noticed that the answers you get from ChatGPT, or the images you get from Bing's image creator, change slightly when you alter the wording a little. That concept, in a much more advanced form, is the idea behind Medprompt.


By using Medprompt, Microsoft managed to make GPT-4 outperform Gemini Ultra on a number of the 30 tests Google had highlighted, including the MMLU test, where GPT-4 with Medprompt inputs achieved a score of 90.10 percent. Which language model will dominate in the future remains to be seen. The battle for the AI throne is far from over.

This article was translated from Swedish to English and originally appeared on pcforalla.se.